Marketing Data Trust Issues: The Signal Audit Framework for Reliable Attribution

Every number on your marketing dashboard is lying to you. Some are lying a little. Some are lying a lot. The question isn't whether your data has problems — it's which numbers you can trust enough to make decisions.

Facebook says you got 200 conversions. Google claims 175. TikTok reports 80. Your Shopify backend shows 290 actual orders. The math doesn't add up because it was never designed to. Each platform reports from its own limited perspective, claiming credit for conversions it influenced — even when three other platforms claim credit for the same customer.

This isn't a bug. It's the architecture of digital marketing measurement. And in 2026, with 40-60% of conversion data lost to privacy restrictions, the gap between reported metrics and reality has never been wider.

The marketers who thrive aren't the ones with perfect data. They're the ones who understand which data to trust, when to trust it, and how to reconcile conflicting signals into decisions that actually work.

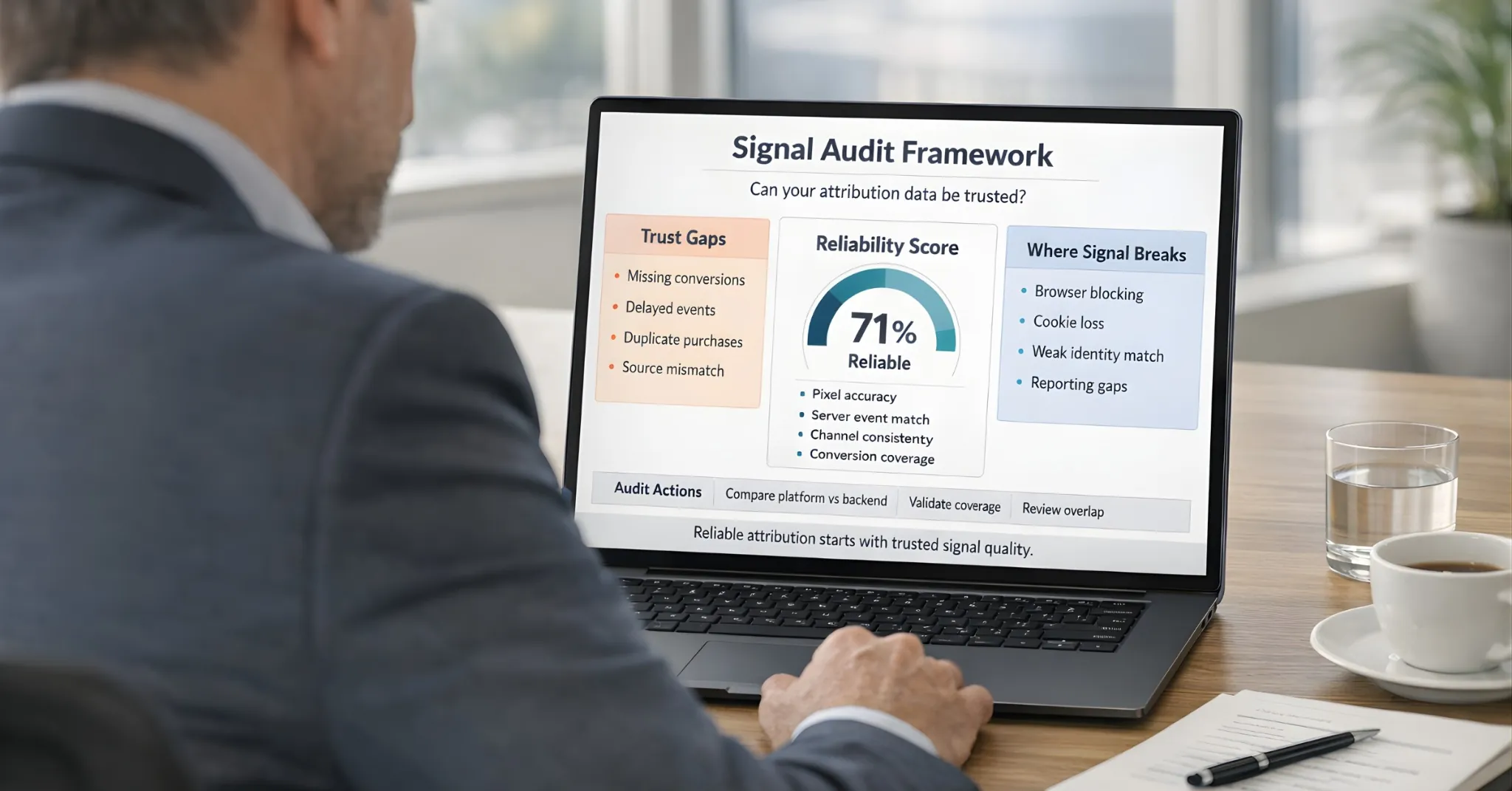

This is the Signal Audit Framework: a systematic approach to identifying which metrics are reliable, which are directional, and which are actively misleading you.

The Data Trust Crisis

Marketing has a trust problem. Not between marketers and leadership — between marketers and their own data.

What Dashboards Show

| Platform | Conversions | ROAS |
|---|---|---|
| Meta | 200 | 4.2x |
| Google | 175 | 5.1x |
| TikTok | 80 | 2.8x |
| Total Claimed | 455 | n/a |

What Actually Happened

| Metric | Value |
|---|---|
| Backend orders | 290 |
| Actual ROAS | 2.9x |

The Discrepancy

Platforms claimed 455 conversions. 290 actually happened.

That's 165 "phantom conversions" — 57% over-reporting.

Where did the extra 165 come from?

Double/triple counting (same customer, multiple platforms)

View-through attribution claiming credit for impressions

Modeled conversions filling in for blocked tracking

Attribution windows extending credit beyond actual influence

This isn't an edge case. This is normal. And it creates a cascade of bad decisions: budget goes to the wrong channels, algorithms optimize toward phantom signals, and leadership loses confidence in marketing's ability to measure its own impact.

Why Platforms Over-Report (And Always Will)

Understanding why data becomes unreliable starts with understanding platform incentives. Ad platforms aren't trying to deceive you — but they are trying to look good.

Here's the uncomfortable truth: Asking Meta if Meta ads work is like asking a real estate agent if now is a good time to buy a house. The answer is always "yes" because their paycheck depends on it.

This isn't malicious. It's structural. Every platform has a built-in conflict of interest when reporting on its own effectiveness.

The Conflict of Interest

Platforms are PAID to run your ads. Platforms REPORT on whether those ads worked. They're grading their own homework.

What platforms want:

You to spend more on their platform

You to believe their ads work

You to keep budget allocated to them

How default attribution settings serve this:

| Default Setting | What It Does | Platform Benefit |
|---|---|---|
| View-through attribution ON | Claims credit for impressions, not just clicks | More conversions claimed |
| Extended attribution windows | 7-day click, 1-day view captures more "credit" | More conversions claimed |
| Statistical modeling | When tracking fails, models estimate favorably | Gaps filled in their favor |

The result: Each platform reports the MAXIMUM plausible credit for conversions. They're not lying — they're optimizing their reporting for their benefit.

Part of the problem is visibility. Meta can't see Google's data (and vice versa), so each platform only sees its own touchpoints. They report what they can see, and what they can see is biased toward making themselves look effective.

Every platform has a structural incentive to claim credit. None have incentives to underreport. This creates systematic over-attribution across your entire marketing stack.

The Data Trust Hierarchy

Not all data is equally reliable. Understanding which sources to trust — and how much — is the foundation of good decision-making.

| Tier | Trust Level | Data Type | Examples | Use For |
|---|---|---|---|---|
| Tier 1 | Highest | Backend transaction data | Shopify orders, Stripe payments, CRM closed deals | Final validation of all marketing claims |
| Tier 2 | High | First-party behavioral | Logged-in user behavior, email-matched journeys | Understanding customer journeys, attribution modeling |
| Tier 3 | Medium | Server-side platform data | Meta CAPI (deduplicated), Google Enhanced Conversions | Campaign optimization, directional performance signals |
| Tier 4 | Lower | Browser-based platform data | Meta Pixel alone, Google Ads tag without enhancement | Rough directional signals only |
| Tier 5 | Lowest | Engagement metrics | Ad impressions, social engagement, email opens | Diagnostic signals, not performance measurement |

The principle: Always reconcile lower-tier data against higher-tier sources. Never let Tier 4-5 data override what Tier 1-2 shows.

The mistake most marketers make: treating all data as equally trustworthy. Platform-reported ROAS gets the same weight as backend revenue. Engagement metrics influence budget decisions. The hierarchy collapses, and decisions become unreliable.

The Seven Metrics That Lie Most Often

Some metrics are structurally prone to unreliability. Know which ones to scrutinize most heavily.

1. Platform-Reported Conversions

Every platform claims its own version of truth. Without reconciliation against your backend, you're aggregating lies.

The multi-touch problem:

Customer sees TikTok ad → Clicks Meta ad → Searches on Google → Buys

| Platform | What They Report |
|---|---|
| TikTok | 1 view-through conversion |
| Meta | 1 click-through conversion |
| Google | 1 click-through conversion |
| Total Claimed | 3 conversions |
| Actual Conversions | 1 |

The fix:

Compare total platform conversions to backend orders

Calculate your "over-reporting ratio"

Apply that ratio when evaluating platform claims
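The double-counting in the journey above can be sketched in a few lines. This is only an illustration: it assumes you can tie each platform-claimed conversion to a backend order ID, and the `claims` structure and the order ID are hypothetical.

```python
# Hypothetical export: each platform's claimed conversions, keyed by backend order ID.
claims = {
    "TikTok": ["order-1001"],  # view-through claim
    "Meta": ["order-1001"],    # click-through claim
    "Google": ["order-1001"],  # click-through claim
}

def count_claims(claims):
    """Return (total platform-claimed conversions, distinct orders behind them)."""
    total_claimed = sum(len(order_ids) for order_ids in claims.values())
    distinct_orders = len({oid for ids in claims.values() for oid in ids})
    return total_claimed, distinct_orders

total_claimed, distinct_orders = count_claims(claims)
print(total_claimed, distinct_orders)  # 3 1
```

Three claims, one order: the sum of platform reports counts the same customer three times.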

2. View-Through Conversions

View-through attribution gives credit for conversions that happened after someone saw (but didn't click) an ad. The problem: they might have converted anyway.

How view-through works: Customer sees your ad (doesn't click) → Later converts → Platform claims credit

Why it's often misleading:

Customer might have converted regardless of seeing the ad

"Seeing" an ad doesn't mean noticing or remembering it

Platforms default to including view-through in totals

The test: Compare "Total Conversions" to "Click-Through Conversions" in your platform. If view-through is 2-3x click-through, that metric is largely noise.

The fix:

Evaluate campaigns on click-through conversions

View-through is directional at best

Run incrementality tests to validate view-through claims
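The test described above is simple arithmetic. A minimal sketch, assuming your platform export gives you total and click-through conversions per campaign (the campaign numbers here are hypothetical):

```python
def view_through_ratio(total_conversions, click_through_conversions):
    """Ratio of view-through to click-through conversions for one campaign."""
    if click_through_conversions <= 0:
        raise ValueError("need at least one click-through conversion")
    view_through = total_conversions - click_through_conversions
    return view_through / click_through_conversions

# Hypothetical campaign: 300 total conversions, 100 of them click-through.
ratio = view_through_ratio(300, 100)
print(ratio)  # 2.0
```

A ratio in the 2-3x range is the signal to evaluate the campaign on click-through conversions only.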

3. ROAS (Without Reconciliation)

ROAS looks like the ultimate metric. But it's only as accurate as the revenue attribution feeding it.

What ROAS should measure: Revenue Generated ÷ Ad Spend = Return on Ad Spend

What ROAS actually measures: Revenue THE PLATFORM CLAIMS ÷ Ad Spend = Platform-Reported ROAS

The gap:

| Metric | Value |
|---|---|
| Platform-Reported ROAS | 4.5x |
| Actual ROAS (backend) | 2.8x |

You think you're profitable at $50 CPA. You're actually losing money at $80 CPA.

The fix: Calculate TRUE ROAS:

True ROAS = Backend Revenue from Channel ÷ Channel Spend

Requires connecting ad spend to actual revenue, not platform claims.
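As a rough sketch, here is the same calculation run twice for one channel: once with the revenue the platform claims, once with the revenue your backend attributes to that channel. The dollar figures are hypothetical, chosen to reproduce the 4.5x and 2.8x gap above.

```python
def roas(revenue, spend):
    """Return on ad spend: revenue divided by spend."""
    if spend <= 0:
        raise ValueError("spend must be positive")
    return revenue / spend

channel_spend = 10_000             # hypothetical ad spend for one channel
platform_claimed_revenue = 45_000  # hypothetical: what the platform reports
backend_channel_revenue = 28_000   # hypothetical: backend orders tied to the channel

platform_roas = roas(platform_claimed_revenue, channel_spend)
true_roas = roas(backend_channel_revenue, channel_spend)
print(platform_roas, true_roas)  # 4.5 2.8
```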

The MER Alternative: Your "North Star" Metric

For ecommerce founders tired of platform lies, there's a simpler metric that bypasses attribution entirely: Marketing Efficiency Ratio (MER).

MER = Total Revenue ÷ Total Ad Spend

Example:

Total Revenue (Shopify): $500,000

Total Ad Spend (all platforms): $100,000

MER = $500,000 ÷ $100,000 = 5.0

For every $1 spent on ads, you generated $5 in revenue.

Why MER is more trustworthy:

Uses Tier 1 data (backend revenue) — the source of truth

Doesn't rely on platform attribution claims

Can't be gamed by platform reporting settings

Reflects actual business performance

The limitation: MER doesn't tell you WHICH channels work. It tells you if your overall marketing is efficient. Use MER as your "North Star" health metric. Use channel-level attribution for optimization decisions.

When MER and platform ROAS diverge: If platforms claim a blended 5x ROAS but your MER is 2.5x, the platforms are claiming twice the revenue your backend recorded, so at least half of the claimed revenue is not real. Trust the MER. Question the platforms.
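A minimal sketch of both calculations, using the figures from this section. The function names are illustrative, and `unsupported_share` assumes the platform figure is a blended ROAS across the same total spend.

```python
def mer(total_revenue, total_ad_spend):
    """Marketing Efficiency Ratio: total backend revenue per dollar of ad spend."""
    if total_ad_spend <= 0:
        raise ValueError("total_ad_spend must be positive")
    return total_revenue / total_ad_spend

def unsupported_share(platform_roas, mer_value):
    """Share of platform-claimed revenue that total backend revenue cannot cover."""
    return max(0.0, 1 - mer_value / platform_roas)

print(mer(500_000, 100_000))        # 5.0
print(unsupported_share(5.0, 2.5))  # 0.5
```

Because MER counts all revenue, including organic sales, the unsupported share is a floor on over-claiming, not an exact measure.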

4. Cost Per Lead (Without Quality)

A cheap lead that never converts is infinitely more expensive than an expensive lead that becomes a customer.

The illusion:

| Campaign | Cost Per Lead |
|---|---|
| Campaign A | $15/lead |
| Campaign B | $45/lead |

"Campaign A is 3x more efficient!"

The reality:

| Campaign | CPL | Close Rate | Cost Per Customer |
|---|---|---|---|
| Campaign A | $15/lead | 2% | $750/customer |
| Campaign B | $45/lead | 20% | $225/customer |

Campaign B is actually more than 3x as efficient: $225 per customer versus $750.

The fix: Track leads through to revenue. Calculate Cost Per CUSTOMER, not Cost Per Lead.
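The conversion from cost per lead to cost per customer is a single division. A small sketch using the two campaigns above (the function name is illustrative):

```python
def cost_per_customer(cost_per_lead, close_rate):
    """Cost to acquire one customer, given CPL and a close rate between 0 and 1."""
    if not 0 < close_rate <= 1:
        raise ValueError("close_rate must be in (0, 1]")
    return cost_per_lead / close_rate

campaigns = {
    "Campaign A": {"cpl": 15, "close_rate": 0.02},
    "Campaign B": {"cpl": 45, "close_rate": 0.20},
}

for name, c in campaigns.items():
    print(f"{name}: ${cost_per_customer(c['cpl'], c['close_rate']):,.0f} per customer")
# Campaign A: $750 per customer
# Campaign B: $225 per customer
```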

5. Last-Click Attribution

Last-click gives 100% credit to the final touchpoint before conversion. This systematically undervalues every channel that isn't capturing demand at the moment of purchase.

The journey:

Customer discovers brand via TikTok ad

Customer researches on Google (organic)

Customer clicks retargeting ad on Instagram

Customer returns via branded Google search → Converts

Last-click attribution:

| Channel | Credit |
|---|---|
| TikTok | 0% |
| Organic | 0% |
| Instagram | 0% |
| Google Brand Search | 100% |

Reality: TikTok created the awareness. Without it, there's no customer to "capture" via search.

The fix: Use multi-touch attribution. At minimum, compare first-touch vs. last-touch to see the gap.
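To see the gap between models, the sketch below scores the journey above three ways. It assumes you already have ordered touchpoints per customer, which in practice is the hard part. The linear model (equal credit per touch) stands in here as the simplest multi-touch option, not as a recommended one.

```python
from collections import Counter

# One ordered journey per converting customer (the example journey from above).
journeys = [
    ["TikTok", "Organic", "Instagram", "Google Brand Search"],
]

def first_touch(journeys):
    """100% of the credit goes to the first touchpoint."""
    return Counter(j[0] for j in journeys if j)

def last_touch(journeys):
    """100% of the credit goes to the final touchpoint."""
    return Counter(j[-1] for j in journeys if j)

def linear(journeys):
    """Each touchpoint in a journey gets an equal share of one conversion."""
    credit = Counter()
    for j in journeys:
        for channel in j:
            credit[channel] += 1 / len(j)
    return credit

print(first_touch(journeys))       # Counter({'TikTok': 1})
print(last_touch(journeys))        # Counter({'Google Brand Search': 1})
print(linear(journeys)["TikTok"])  # 0.25
```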

6. Engagement Metrics (As Performance Indicators)

Likes, shares, comments, and video views feel like success. But they don't correlate reliably with revenue.

What engagement tells you:

People noticed your content

Some found it interesting enough to interact

Your creative might be resonating

What engagement doesn't tell you:

Whether those people will buy

Whether they're even your target customer

Whether the attention converts to revenue

The danger: High engagement, low conversion = Content that entertains but doesn't sell. Optimizing for engagement can actively hurt performance by attracting the wrong audience.

The fix: Use engagement as a diagnostic, not a KPI. Primary metrics should connect to revenue.

7. Modeled/Estimated Conversions

When platforms can't track directly (iOS opt-outs, cookie blocks), they estimate using statistical models. These estimates are educated guesses, not measurements.

What's happening: ~75-85% of iOS users opt out of tracking. Platforms can't directly measure these conversions. They use models to "fill in" the gaps.

How modeling works: Platform observes conversions from trackable users. Applies patterns to estimate conversions from untrackable users. Reports total = Measured + Estimated.

The reliability issue: Models assume untrackable users behave like trackable users. This assumption may or may not be accurate. You can't verify modeled conversions against reality.

The fix:

Understand what percentage of your conversions are modeled

Treat modeled data as directional, not precise

Validate against backend data

The Signal Audit Framework

Here's the practical process for auditing your marketing data and establishing what you can trust.

Step 1: Establish Your Source of Truth

Define what "a conversion" means in your backend system. Pull actual orders/revenue for the audit period. This is the number everything else reconciles against.

Step 2: Calculate Platform Over-Reporting Ratio

Over-Reporting Ratio = Total Platform-Claimed Conversions ÷ Backend Conversions

Example: Platforms claim 455 conversions. Backend shows 290 orders. Ratio = 455 ÷ 290 = 1.57 (57% over-reporting)
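The same calculation as a short script, using the per-platform numbers from the dashboard example earlier in the article:

```python
platform_claims = {"Meta": 200, "Google": 175, "TikTok": 80}
backend_orders = 290

total_claimed = sum(platform_claims.values())
over_reporting_ratio = total_claimed / backend_orders
phantom_conversions = total_claimed - backend_orders

print(total_claimed)                   # 455
print(round(over_reporting_ratio, 2))  # 1.57
print(phantom_conversions)             # 165
```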

Step 3: Calculate Tracking Accuracy Per Platform

For each platform, compare claimed conversions to attributed backend orders.

Tracking Accuracy = (Platform-Attributed Backend Orders ÷ Platform-Claimed Conversions) × 100

Example: Meta claims 200 conversions. Backend shows 120 orders from Meta traffic. Accuracy = (120 ÷ 200) × 100 = 60%
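A minimal sketch of the per-platform calculation. It assumes you can attribute backend orders to a platform yourself (for example via UTM parameters or click IDs stored with the order); that attribution step is not shown.

```python
def tracking_accuracy(attributed_backend_orders, platform_claimed):
    """Percentage of platform-claimed conversions backed by attributed orders."""
    if platform_claimed <= 0:
        raise ValueError("platform_claimed must be positive")
    return 100 * attributed_backend_orders / platform_claimed

print(tracking_accuracy(120, 200))  # 60.0
```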

Step 4: Identify Highest-Discrepancy Channels

Which platforms have the biggest gap between claims and reality? Those are your least reliable data sources. Treat their reported metrics with appropriate skepticism.

Step 5: Establish Adjusted Expectations

When a platform reports 100 conversions at 60% accuracy, expect ~60 actual conversions. Adjust ROAS and CPA calculations accordingly.
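Here is one way the adjustment might look in code. The $5,000 spend figure is hypothetical, and the sketch assumes accuracy applies evenly across the platform's campaigns, which is a simplification.

```python
def adjust_for_accuracy(reported_conversions, accuracy_pct, spend):
    """Scale reported conversions by tracking accuracy and recompute CPA."""
    expected = reported_conversions * accuracy_pct / 100
    return {
        "expected_conversions": expected,
        "reported_cpa": spend / reported_conversions,
        "adjusted_cpa": spend / expected,
    }

result = adjust_for_accuracy(reported_conversions=100, accuracy_pct=60, spend=5_000)
print(result["expected_conversions"])    # 60.0
print(result["reported_cpa"])            # 50.0
print(round(result["adjusted_cpa"], 2))  # 83.33
```

The same scaling applies to ROAS: multiply the platform-reported figure by the accuracy percentage to get a rough adjusted value.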

The Reconciliation Habit

Data trust isn't a one-time audit. It's an ongoing practice.

Weekly Checklist

Total platform-reported conversions vs. backend orders — Is the over-reporting ratio stable? Changing?

Click-through vs. view-through breakdown — Is view-through inflating your totals?

Platform ROAS vs. True ROAS — How big is the gap?

Top campaigns by platform vs. top campaigns by backend revenue — Do the rankings match?

Any major discrepancies appearing? — New patterns that need investigation?

Monthly Checklist

Tracking accuracy by platform (recalculate)

Lead-to-customer conversion rates by source

Customer quality metrics by acquisition channel

Attribution model impact (compare models)

Quarterly Checklist

Full signal audit (complete process)

Platform attribution settings (any defaults changed?)

Tracking infrastructure health

Data quality across entire stack

The 40-60% Problem

Here's the uncomfortable truth: in the Post-Cookie Era, 40-60% of your conversion data likely never reaches ad platforms at all.

Where Signal Gets Lost

| Signal Blocker | Impact |
|---|---|
| iOS App Tracking Transparency | 75-85% opt-out rate |
| Safari/Firefox | Third-party cookies blocked |
| Ad blockers | ~30-40% of desktop users |
| Cross-device journeys | Connections broken between devices |
| Consent management | GDPR/CCPA opt-outs |

The Compound Effect

These aren't independent. They stack. A customer on Safari with an ad blocker who opts out of tracking is essentially invisible to your pixel-based tracking.

What This Means for Your Data

The conversions you CAN track aren't a random sample. They're systematically biased toward:

Android users

Chrome users without ad blockers

Users who consent to tracking

Single-device journeys

Your "data" represents a specific slice of customers, not your full customer base.

The Fix

Server-side tracking recovers much of this lost signal. First-party data with identity resolution fills more gaps. But you'll never have 100% visibility.

Accept imperfection. Design for it.

The Pro Level: From Attribution to Incrementality

Here's the question that separates good marketers from great ones: "Would this sale have happened if I turned this ad off?"

Attribution asks: "Who gets credit?" Incrementality asks: "Did this ad actually cause the sale?"

Attribution vs. Incrementality

| Approach | Question | Answer | Limitation |
|---|---|---|---|
| Attribution | "Which touchpoint should get credit?" | Depends on your model (last-click, first-click, multi-touch) | All models are somewhat arbitrary |
| Incrementality | "Would this customer have converted WITHOUT this ad?" | Measures TRUE causal impact | Requires testing (holdout groups, geo-tests) |

The difference: Attribution tells you who touched the ball last. Incrementality tells you if the game would have been won anyway.

Example

Brand search ads show 5x ROAS in your attribution reports.

Attribution says: "Brand search is your best channel!"

Incrementality test reveals: 80% of those customers would have found you organically anyway. Only 20% of the attributed revenue is incremental, so true incremental ROAS is closer to 1.0x (5x × 20%).

You were paying to capture demand that was already yours.

How to Test Incrementality

Holdout tests: Turn off ads for a random segment, measure impact

Geo tests: Turn off ads in specific regions, compare to control

Conversion lift studies: Platform-run tests (Meta, Google offer these)
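For a holdout test, the core arithmetic looks roughly like the sketch below. All numbers are hypothetical, and a real test also needs random assignment and a significance check, neither of which is shown.

```python
def incremental_roas(test_revenue, holdout_revenue, test_size, holdout_size, ad_spend):
    """Scale holdout revenue to the test group's size, then divide the lift by spend."""
    if holdout_size <= 0 or ad_spend <= 0:
        raise ValueError("holdout_size and ad_spend must be positive")
    baseline_revenue = holdout_revenue * test_size / holdout_size
    incremental_revenue = test_revenue - baseline_revenue
    return incremental_revenue / ad_spend

# Hypothetical test: 90,000 users saw ads, 10,000 were held out.
result = incremental_roas(
    test_revenue=50_000,
    holdout_revenue=4_000,
    test_size=90_000,
    holdout_size=10_000,
    ad_spend=10_000,
)
print(result)  # 1.4
```

The holdout group shows what revenue looks like without ads. Only the revenue above that baseline counts as caused by the spend.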

When to Use Incrementality

Before scaling a channel significantly

When platform ROAS seems "too good to be true"

For brand/retargeting campaigns (highest risk of non-incremental spend)

When MER doesn't improve despite "successful" campaigns

Incrementality testing is the highest level of data trust. It doesn't rely on attribution models or platform claims. It measures actual causal impact through controlled experiments.

Building Trust Into Your Data Infrastructure

The goal isn't perfect data — it's data you understand well enough to trust appropriately.

The Trustworthy Data Stack

| Layer | Component | What to Do |
|---|---|---|
| Layer 1 | Backend as Source of Truth | All marketing metrics ultimately reconcile against actual revenue. Non-negotiable. |
| Layer 2 | First-Party Data Collection | Capture customer identifiers (email, phone) at key touchpoints. Build your private identity graph. Own the data. |
| Layer 3 | Server-Side Tracking | Implement CAPI (Meta), Enhanced Conversions (Google). Send conversion data server-to-server. Bypass browser restrictions. |
| Layer 4 | Deduplication | When running both pixel and server-side, deduplicate events. Prevent double-counting. |
| Layer 5 | Multi-Touch Attribution | Move beyond last-click. Understand the full customer journey. Credit channels appropriately. |
| Layer 6 | Regular Reconciliation | Weekly/monthly audits comparing platform data to backend. Maintain awareness of discrepancies. |
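The deduplication layer is the easiest one to picture in code. The sketch below assumes your pixel and server events carry a shared event name and event ID; the field names and sample events are hypothetical, and this is an illustration of the idea rather than any platform's actual logic.

```python
def deduplicate(events):
    """Keep the first event seen for each (event_name, event_id) pair."""
    seen = set()
    unique = []
    for event in events:
        key = (event["event_name"], event["event_id"])
        if key not in seen:
            seen.add(key)
            unique.append(event)
    return unique

# Hypothetical events: the same purchase arrives from both the pixel and the server.
events = [
    {"event_name": "Purchase", "event_id": "order-1001", "source": "pixel"},
    {"event_name": "Purchase", "event_id": "order-1001", "source": "server"},
    {"event_name": "Purchase", "event_id": "order-1002", "source": "server"},
]

unique_events = deduplicate(events)
print(len(unique_events))  # 2
```

Without a shared ID on both the browser and server events, there is nothing to match on, and the same purchase gets counted twice.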

The Decision Framework

When your data has known reliability issues, how do you make decisions?

Principle 1: Tier Your Confidence

| Confidence Level | Data Basis | Action |
|---|---|---|
| High | Backend data supports | Act decisively |
| Medium | Multiple platform sources agree | Proceed with monitoring |
| Low | Single platform, no backend validation | Investigate before acting |

Scale your action magnitude to your confidence level.
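If you want to make the tiers explicit in a reporting script, the table reduces to a small lookup. The function and parameter names below are illustrative, and treating "multiple" as two or more agreeing platforms is an assumption.

```python
def recommended_action(backend_supports, agreeing_platforms):
    """Map the evidence behind a claim to the action tiers in the table above."""
    if backend_supports:
        return "Act decisively"
    if agreeing_platforms >= 2:
        return "Proceed with monitoring"
    return "Investigate before acting"

print(recommended_action(backend_supports=True, agreeing_platforms=1))   # Act decisively
print(recommended_action(backend_supports=False, agreeing_platforms=2))  # Proceed with monitoring
print(recommended_action(backend_supports=False, agreeing_platforms=1))  # Investigate before acting
```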

Principle 2: Look for Convergence

When multiple independent data sources point the same direction, confidence increases.

If Meta, Google, AND backend all say Campaign X works → Act

If only Meta says it works while backend disagrees → Investigate

Principle 3: Use Relative, Not Absolute

Even unreliable data can show relative differences. If Campaign A shows 200 conversions and Campaign B shows 50, A is probably better than B — even if absolute numbers are off.

Compare within the same platform using the same metrics.

Principle 4: Validate Big Decisions

Before scaling spend significantly, validate with:

Backend revenue data

Incrementality test (if possible)

Holdout analysis

Never scale based solely on platform-reported data.

Principle 5: Document Your Assumptions

What did you assume about data accuracy? What would change if those assumptions were wrong?

This lets you revisit decisions when new information emerges.

Common Mistakes to Avoid

Critical Mistakes (Highest Cost)

Trusting platform ROAS without reconciliation — Platform ROAS is self-reported by entities with incentive to over-claim. Always validate against backend revenue.

Summing platform conversions — Adding up conversions across platforms guarantees over-counting. Total conversions = backend orders, not platform sum.

Making big budget decisions on low-tier data — Scaling spend based solely on pixel data is gambling. Validate with backend before significant increases.

High-Cost Mistakes

Treating modeled data as measured — Estimated conversions are educated guesses, not facts. Treat them with appropriate uncertainty.

Ignoring view-through inflation — Platforms default to including view-through. This can double your reported conversions without real impact.

Optimizing for engagement metrics — Likes and clicks don't pay bills. Optimize for revenue-connected metrics.

Moderate-Cost Mistakes

Last-click only attribution

Ignoring cross-device journey gaps

Inconsistent conversion definitions across platforms

Auditing data only when problems surface

The Bottom Line

Your marketing data is unreliable. This isn't a fixable bug — it's a permanent feature of the landscape. Platforms have incentives to over-report. Privacy restrictions create gaps. Cross-device journeys fragment visibility. Attribution models simplify complex realities.

The marketers who succeed aren't the ones waiting for perfect data. They're the ones who:

Understand the Data Trust Hierarchy — Know which sources to trust for which decisions.

Reconcile regularly — Compare platform claims against backend reality weekly.

Calculate their own accuracy ratios — Know how much each platform over-reports.

Use relative comparisons — Even flawed data shows relative performance.

Validate big decisions — Never scale based solely on platform-reported metrics.

Build better infrastructure — Server-side tracking, first-party data, multi-touch attribution.

The question isn't whether your data is perfect. It's whether you understand its imperfections well enough to make good decisions anyway.

Trust your backend. Question your platforms. Reconcile everything. That's how you turn unreliable marketing metrics into reliable business decisions.
